Welcome back. Today we're stepping into newer territory: exploring if, how, when, and where GenAI actually belongs in Data and Analytics.

One of the biggest splits in the data community right now is about Large Language Models (LLMs). Some are excited. Some are wary. Some think the idea of using an LLM on real data is a joke. Others are already doing it successfully.

One key sticking point is that LLMs are non-deterministic. (Privacy is another, but I'll save that for a future edition.)

What does 'non-deterministic' mean?

Here's a simple example. I asked an LLM to "write a haiku about data" twice:

Same input. Different outputs. Neither is "wrong," but neither is repeatable either.
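If you want to see where that variation comes from, here is a minimal sketch. It assumes a toy model with just three possible next words and made-up probabilities (`NEXT_WORD_PROBS` is purely illustrative). A real LLM works over a huge vocabulary, but the idea is the same: the model usually samples from a probability distribution rather than always taking the top choice.

```python
import random

# Hypothetical next-word probabilities for illustration only.
# A real LLM computes these over a very large vocabulary.
NEXT_WORD_PROBS = {"rows": 0.5, "tables": 0.3, "numbers": 0.2}


def sample_word(rng: random.Random) -> str:
    """Draw one word at random, weighted by its probability."""
    words = list(NEXT_WORD_PROBS)
    weights = list(NEXT_WORD_PROBS.values())
    return rng.choices(words, weights=weights, k=1)[0]


def greedy_word() -> str:
    """Always pick the most likely word: repeatable, but never varied."""
    return max(NEXT_WORD_PROBS, key=NEXT_WORD_PROBS.get)


rng = random.Random()  # unseeded, so each run can differ
print([sample_word(rng) for _ in range(5)])  # varies from run to run
print([greedy_word() for _ in range(5)])     # the same word every time
```

Sampling is what makes the haiku different each time. Always taking the top choice would be repeatable, but that is not how most assistants are configured by default.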

Understandably, that is not what we want for our reporting numbers.

In BI and analytics, determinism is a given.

- Run a dashboard filter today → you'll get the same numbers tomorrow, as long as the underlying data has not changed.

- Query a table twice → identical results.

That consistency is the bedrock of trust. Without it, adoption collapses.

So if you've spent your career ensuring dashboards always give the right number, the idea of mixing LLMs with data feels like chaos. How do I trust a number if the model might make it up?

Enter the Semantic Layer

A semantic layer brings determinism back into the picture.

It defines:

- Metrics

- Dimensions

- Business logic

all in one place.

Instead of every tool or analyst defining "revenue" differently, the semantic layer enforces one definition everywhere. The joins are set. The aggregation types are pre-defined.

No matter whether you're querying from a dashboarding tool, Excel, or through an AI assistant, the meaning and calculation of "customer" or "net sales" or "conversion rate" is consistent. The randomness of the LLM doesn't change the underlying truth.
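To make that concrete, here is a minimal sketch of the idea in Python. It is not any particular product's API. The metric names, expressions, table, and the `compile_query` helper are all hypothetical, and real semantic layers also handle joins, filters, and much more.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str  # the single agreed aggregation for this metric
    table: str


# Hypothetical definitions: one place, one meaning per metric.
METRICS = {
    "net_sales": Metric("net_sales", "SUM(amount - discount)", "orders"),
    "order_count": Metric("order_count", "COUNT(*)", "orders"),
}
DIMENSIONS = {"region", "order_month"}


def compile_query(metric_name: str, group_by: Optional[str] = None) -> str:
    """Turn a metric request into SQL. Same request in, same SQL out."""
    if metric_name not in METRICS:
        raise KeyError(f"Unknown metric: {metric_name}")
    metric = METRICS[metric_name]
    if group_by is None:
        return f"SELECT {metric.expression} AS {metric.name} FROM {metric.table}"
    if group_by not in DIMENSIONS:
        raise KeyError(f"Unknown dimension: {group_by}")
    return (
        f"SELECT {group_by}, {metric.expression} AS {metric.name} "
        f"FROM {metric.table} GROUP BY {group_by}"
    )


print(compile_query("net_sales"))
print(compile_query("net_sales", group_by="region"))
```

Whoever asks for "net_sales", whether a dashboard, a spreadsheet, or an assistant, gets SQL built from the same definition.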

I've got a future edition planned to look at some of the semantic layers out there and the capabilities they provide. For this conversation, we will stick with a lightweight definition. Please join the discussion if you want more clarity on this front.

MCP Servers: Guardrails for AI

Model Context Protocol (MCP) servers take this a step further.

They act as a translator between LLMs and structured data.

- The LLM can only ask questions against the schema and semantics you expose.

- It is constrained to safe, machine-readable context.

In practice, when the server exposes only the metrics and fields you have defined, the model cannot invent arbitrary SQL. It has to choose from the valid queries and fields already on offer. It is like giving the AI a menu instead of free rein in the pantry.
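The menu idea can be sketched in a few lines of plain Python. To be clear, this is not the MCP SDK or the protocol itself. It is just an illustration of the guardrail, and the allowed names and the `validate_request` function are assumptions for the example.

```python
# Hypothetical "menu": the only metrics and dimensions the model may request.
ALLOWED_METRICS = {"net_sales", "conversion_rate"}
ALLOWED_DIMENSIONS = {"region", "quarter"}


def validate_request(request: dict) -> dict:
    """Accept only requests built from the menu; reject everything else."""
    metric = request.get("metric")
    dimensions = request.get("dimensions", [])
    if metric not in ALLOWED_METRICS:
        raise ValueError(f"Metric not on the menu: {metric!r}")
    unknown = [d for d in dimensions if d not in ALLOWED_DIMENSIONS]
    if unknown:
        raise ValueError(f"Dimensions not on the menu: {unknown}")
    # Return only the vetted fields, so stray keys such as raw SQL are dropped.
    return {"metric": metric, "dimensions": list(dimensions)}


# A request the model might produce from a natural-language question.
print(validate_request({"metric": "net_sales", "dimensions": ["region"]}))

# A request that tries to go off-menu is refused before touching any data.
try:
    validate_request({"metric": "secret_margin", "sql": "SELECT * FROM payroll"})
except ValueError as err:
    print(err)
```

The model still decides which item to order, but it can only order what is on the menu.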

Note here: MCP servers are new. To me they are a bit like my plug-in hybrid electric car. I can plug it in and it works. I get the high-level theory of how, but don't ask me to explain the mechanics. And that's fine, I don't need to be an electrical engineer to drive it.

Together: Trust + Flexibility

- Semantic layers ensure consistent meaning.

- MCP servers ensure safe access.

- Together, they bridge deterministic BI with probabilistic AI.

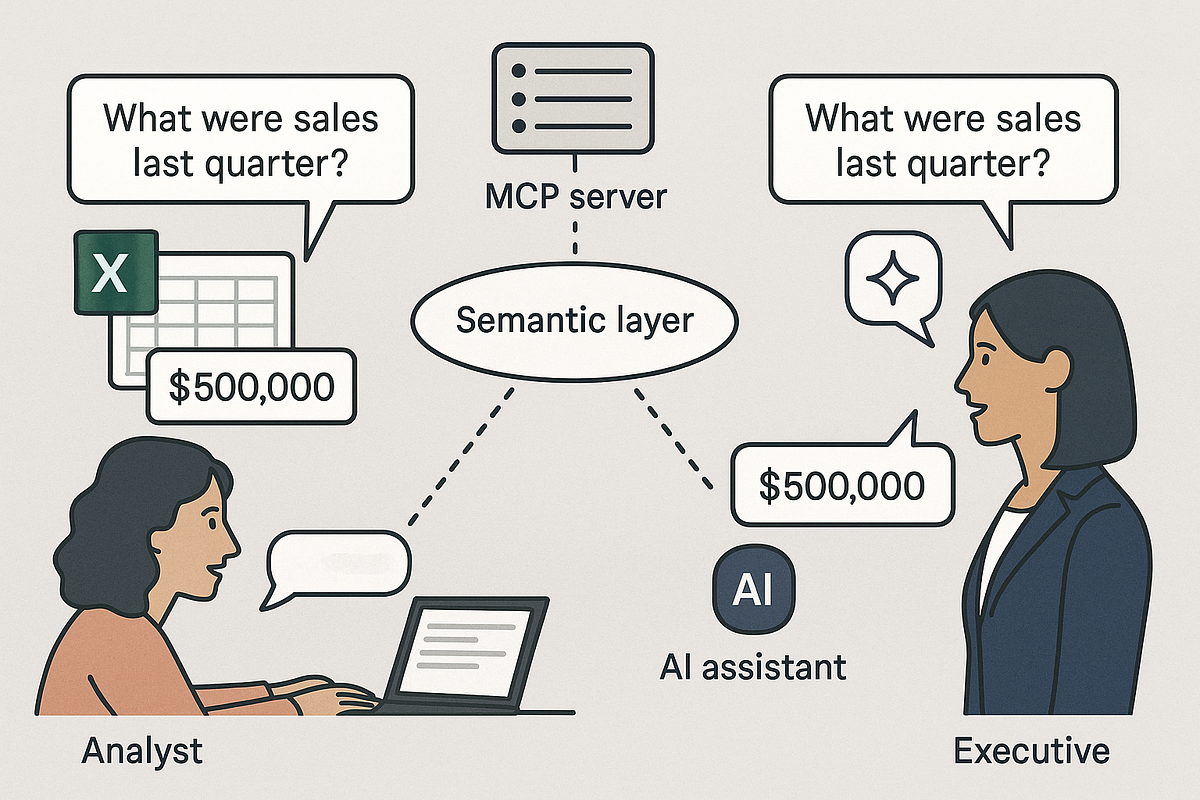

Picture this: an analyst asks in Excel, "What were sales last quarter?" and an executive asks the same question via an AI assistant. The AI assistant, constrained by the MCP server, hits the same semantic layer as the Excel query. Both get the same number.

That's the key distinction: the SQL generated and the number it returns are deterministic. The wording of the LLM's response may change, but the underlying result does not.
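Here is a small sketch of that scenario using Python's built-in `sqlite3` module. The table, the figures, and the function names are invented for illustration. The two "clients" are stand-ins for Excel and an AI assistant, and the assistant's varying wording is simulated with two fixed templates rather than a real model.

```python
import sqlite3

# Made-up sales data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (quarter TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("2024-Q4", 100.0), ("2025-Q1", 120.0), ("2025-Q1", 80.0)],
)


def sales_for_quarter(quarter: str) -> float:
    """The single shared definition of 'sales' that every client goes through."""
    row = conn.execute(
        "SELECT SUM(amount) FROM sales WHERE quarter = ?", (quarter,)
    ).fetchone()
    return row[0]


def excel_client(quarter: str) -> float:
    """Stand-in for the analyst's spreadsheet query."""
    return sales_for_quarter(quarter)


def assistant_client(quarter: str, phrasing: int) -> str:
    """Stand-in for the assistant: the wording varies, the number does not."""
    value = sales_for_quarter(quarter)
    templates = [
        "Sales for {q} were {v:.2f}.",
        "In {q}, sales came to {v:.2f}.",
    ]
    return templates[phrasing % len(templates)].format(q=quarter, v=value)


print(excel_client("2025-Q1"))
print(assistant_client("2025-Q1", phrasing=0))
print(assistant_client("2025-Q1", phrasing=1))
```

Both assistant answers read differently, yet each carries the same figure the spreadsheet query returns.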

The non-determinism does not disappear, but it is bounded and the risk is managed.

In many ways, semantic layers and MCP servers are becoming part of the modern governance layer for AI. They don't replace the human side of governance (clear roles, accountability, and policies) but they do make technical guardrails real.

It always comes back to value.

So technically, we can make LLMs safe enough to query structured data. Then the question is… Why would you?

I'm seeing plenty of use cases that confuse movement with progress. If you can already get the answer from a dashboard or an existing Slack integration, what is different? Is the ease of natural language worth the compute and migration cost? Does the value only show up when you start automating decisions and workflows?

Semantic layers and MCP servers make the conversation possible. Which means it's time to move the conversation to value, not just feasibility.

If you're considering LLMs in analytics, ask these questions before you start:

- What's the use case? What business decision will be different? Getting an answer isn't enough. Be clear on which decision or workflow will actually change because of it, and how that change contributes to business success.

- Do we have a semantic layer in place for that use case? Without one, you're not enforcing consistent definitions. You'll be surfacing inconsistencies, not clarity.

- Can we expose it safely? Whether through MCP servers or another controlled interface, the LLM needs guardrails to keep queries valid and secure.